Enterprise Reference Architecture for AI Applications at Scale¶

Estimated time to read: 38 minutes

Executive summary¶

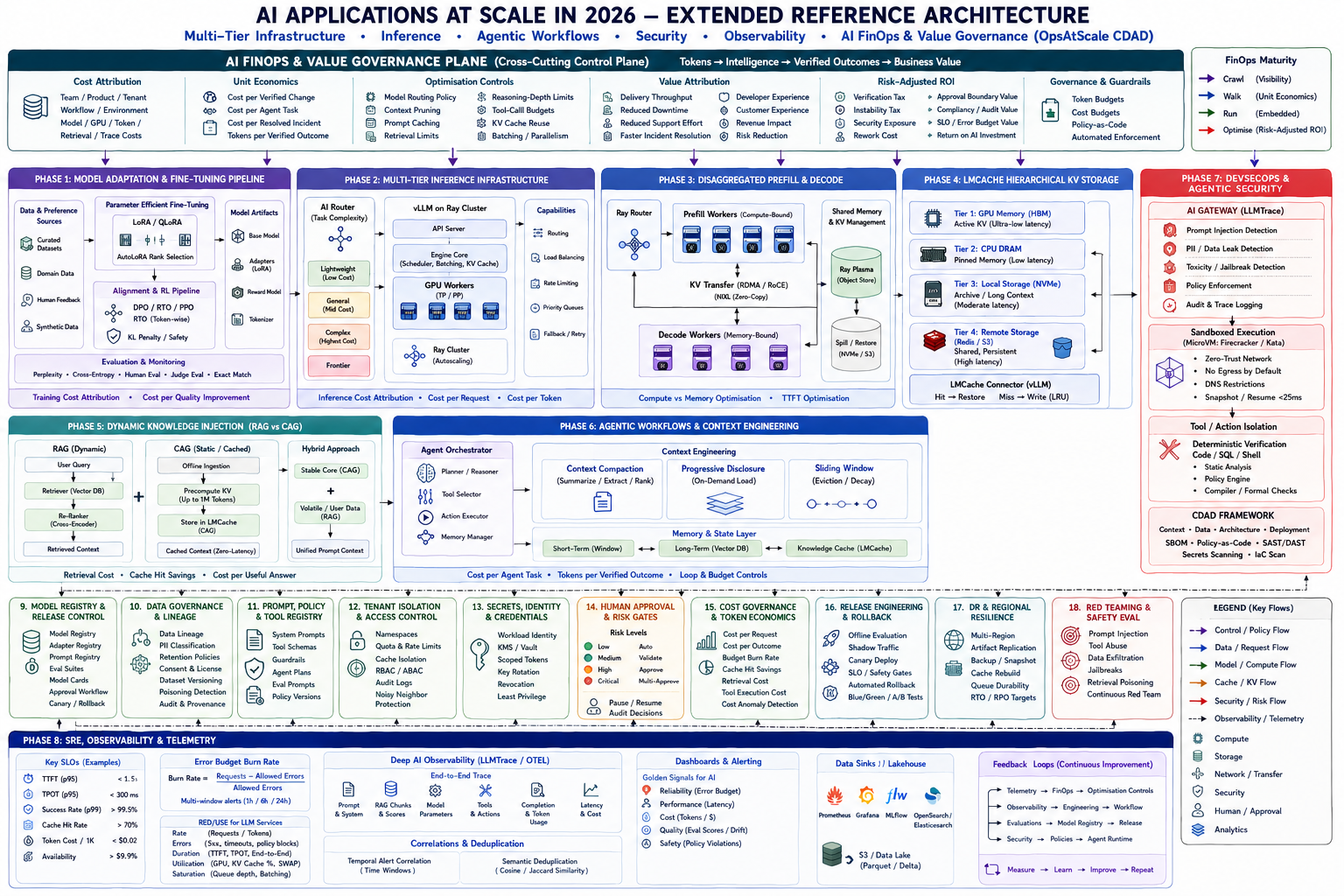

This reference document turns the final architecture into an implementable enterprise blueprint for 2026. The core design conclusion is that scaled AI platforms are no longer “one model behind one API”. They are multi-plane systems: a model-adaptation plane, a distributed inference plane, a KV-cache and memory plane, a knowledge plane, an agentic execution plane, a security and policy plane, an observability plane, and a value-governance plane. The most successful designs separate these concerns explicitly so they can scale, fail, and be governed independently. That separation is consistent with the engineering patterns documented by vLLM, Ray, LMCache, MLflow, OpenTelemetry, the NIST AI RMF, the NCSC secure AI guidance, and the FinOps Foundation’s AI guidance.

The report’s primary architectural recommendation is to default to smaller or mid-sized domain-tuned models with adapter-based post-training, then use progressive routing to reserve frontier models for only those requests that genuinely require their capability. That recommendation follows directly from the economics and operating characteristics described in the LoRA and QLoRA papers, the current Ray Serve and vLLM deployment guidance, and the FinOps Foundation’s emphasis on inference efficiency and cost-per-outcome rather than raw usage.

For inference, the reference state is vLLM-based serving with PagedAttention, continuous batching, tensor and pipeline parallelism where required, prefix-aware routing where workloads are prefix-heavy, and prefill/decode disaggregation once latency variance or GPU contention becomes material. Where context reuse is substantial, KV cache should be treated as enterprise infrastructure rather than as ephemeral GPU state. That implies LMCache-style hierarchical storage, cache-aware routing, and explicit capacity policies for GPU HBM, CPU memory, local NVMe, and shared remote cache or object tiers.

For dynamic knowledge, the most implementable pattern is not “RAG everywhere”. It is a hybrid: use retrieval for fast-changing or unbounded corpora, and use cache-augmented or prompt-cached context for bounded, stable, frequently reused “golden knowledge”. Long-context evidence also shows that larger context windows do not remove the need for context discipline; attention quality and retrieval quality still matter. This is why this document treats CDAD as an operational discipline: keep durable knowledge outside the active prompt, load only the minimal verified context required for the next step, and compact aggressively after each tool boundary.

Security must be designed as a hard boundary around agentic execution, not as a moderation add-on. The NIST AI RMF, the NCSC secure AI guidelines, CISA’s Secure by Design guidance, MITRE ATLAS, and recent NIST work on agent hijacking all point to the same operating model: threat-model the entire AI lifecycle, isolate high-risk tool execution, verify outputs deterministically before actuation, maintain auditable lineage and provenance, and red-team continuously. For high-risk code and tool execution, microVM-backed isolation provides materially stronger workload isolation than ordinary shared-kernel containers.

Finally, AI FinOps must be built into the architecture rather than bolted on afterwards. Research from Google Cloud DORA research and guidance from FinOps Foundation show that AI amplifies existing delivery systems. If routing, review, testing, and release systems are weak, AI increases throughput and instability at the same time. The correct measurement unit is therefore not token volume. It is token, model, and GPU spend per verified outcome, combined with verification burden, instability cost, and business value attribution.

Reference architecture¶

The reference architecture below is a synthesis of the primary papers and official implementation guides used in this report. It separates the runtime into a request plane, model-serving plane, memory and knowledge plane, agent-execution plane, and shared control planes for identity, policy, observability, and value governance. That separation follows the modular patterns documented by Ray Serve, vLLM, LMCache, MLflow, OpenTelemetry, NIST, and the FinOps Foundation.

flowchart LR

U[Users / Apps / Channels] --> G[AI Gateway]

G --> R[Capability and Cost Router]

R --> O[Agent Orchestrator and Workflow Engine]

R --> V[vLLM / Ray Serve Inference Cluster]

subgraph Serving Plane

V --> P[Prefill Workers]

V --> D[Decode Workers]

P <--> K[KV Cache Plane]

D <--> K

K --> L1[GPU HBM]

K --> L2[CPU DRAM]

K --> L3[Local NVMe]

K --> L4[Shared Remote Cache / Object Store]

end

subgraph Knowledge Plane

O --> RET[Retrieval Services]

RET --> VDB[Vector / Hybrid Index]

O --> CAG[Golden Knowledge Cache]

CAG --> K

end

subgraph Adaptation Plane

T[Training and Post-Training] --> MR[Model Registry]

T --> PR[Prompt and Tool Registry]

MR --> V

PR --> O

end

subgraph Agent Execution Plane

O --> S[Sandboxed Tool Workers]

S --> SYS[Enterprise Systems / APIs]

end

subgraph Shared Control Planes

ID[Identity, Secrets, Tenant Policy]

POL[Policy Engine and Approval Gates]

OBS[Tracing, Metrics, Logs, Evals]

FIN[AI FinOps and Value Governance]

LIN[Data Lineage and Audit]

end

G --- ID

O --- POL

S --- POL

V --- OBS

O --- OBS

G --- FIN

O --- FIN

V --- FIN

T --- LIN

O --- LIN

V --- LINThe control-plane relationships matter as much as the data flow. A model registry without prompt versioning, lineage, policy and cost association is insufficient for production AI; the same is true for tracing without identity context or budget ownership. The entity relationship below shows the minimum viable governance linkage for enterprise operation.

erDiagram

TENANT ||--o{ WORKLOAD : owns

TENANT ||--o{ BUDGET : has

WORKLOAD ||--o{ MODEL_DEPLOYMENT : uses

WORKLOAD ||--o{ PROMPT_VERSION : references

WORKLOAD ||--o{ TOOL_POLICY : constrained_by

MODEL_REGISTRY ||--o{ MODEL_VERSION : contains

MODEL_VERSION ||--o{ MODEL_DEPLOYMENT : promoted_to

PROMPT_REGISTRY ||--o{ PROMPT_VERSION : contains

DATASET ||--o{ LINEAGE_RUN : feeds

LINEAGE_RUN ||--o{ MODEL_VERSION : produces

REQUEST_TRACE ||--o{ EVAL_RESULT : evaluated_by

REQUEST_TRACE ||--o{ COST_EVENT : generates

REQUEST_TRACE ||--o{ POLICY_DECISION : checked_by

BUDGET ||--o{ COST_EVENT : limits

IDENTITY ||--o{ REQUEST_TRACE : attributed_to

SECRET ||--o{ TOOL_POLICY : authorisesA practical routing policy should be explicit, codeable, and reviewable. The table below is a recommended baseline.

| Workload class | Default execution path | Promotion trigger | Cost-control goal |

|---|---|---|---|

| Deterministic transformation, classification, policy checks | Rules, SQL, search, or classical service first | Only promote to LLM if rule coverage is insufficient | Avoid paying for cognition you do not need |

| Stable enterprise knowledge, repeated prompts | Pre-cached context or prompt/KV cache reuse | Promote to RAG only when freshness or corpus breadth requires it | Maximise cache hit rate and avoid repeated prefill |

| Factual lookup over changing corpora | Hybrid retrieval with re-ranking + bounded generation | Promote to larger model only if answer confidence or judge score is low | Bound retrieval and context cost |

| Workflow planning and tool use | Mid-tier model + CDAD packet + sandboxed tools | Promote to frontier model on repeated failure, ambiguity, or high-risk domain | Control cost per successful task |

| Safety-critical or externally acting agent step | Mandatory approval gate or deterministic verifier before actuation | Never bypass approval for privileged actions | Minimise downstream failure cost |

This routing model is derived from DORA’s “AI as amplifier” findings, FinOps guidance on inference efficiency and cost-per-outcome, Ray Serve routing patterns, and the operational distinction between cache reuse, retrieval, and tool-based automation.

Training and serving phases¶

Model adaptation and fine-tuning¶

Phase 1 establishes the model portfolio and adaptation pipeline. The purpose is not simply to improve benchmark scores; it is to create deployable models with known data lineage, stable behaviour, bounded serving costs, and promotion criteria that can survive release engineering and compliance review. LoRA remains the foundational adapter pattern because it reduces trainable parameters by injecting low-rank matrices into frozen base weights, while QLoRA keeps the base model quantised during fine-tuning so teams can adapt large models with materially lower memory requirements.

Responsibilities. The platform team owns the training substrate, experiment tracking, registry integration, and promotion gates. Applied AI teams own task data curation, eval design, and adapter objectives. Security and compliance teams approve data use, retention, and release predicates. The output of the phase is not “a model checkpoint”; it is a promoted artefact bundle containing the base model reference, adapter version, training dataset lineage, eval results, safety findings, and deployment metadata. This packaging model aligns with MLflow Model Registry and dataset tracking, OpenLineage, SLSA provenance, and NIST’s emphasis on TEVV throughout the lifecycle.

Required components. A complete implementation needs a model registry, experiment tracker, dataset versioning and lineage, prompt and tool registries, offline eval harness, safety eval harness, adapter storage, and a release policy engine. MLflow already provides model registry, prompt registry, tracing, evaluation, and dataset tracking primitives that map well onto this architecture.

Adaptation choices. Use LoRA for the normal case, QLoRA when GPU memory is the constraining factor, and multi-LoRA serving if you need many domain or tenant adapters on one shared base model. AutoLoRA is better treated as an optimisation option in the adaptation lab rather than a platform default, because its value is greatest when teams are already running disciplined offline experiments. DPO is the recommended first production preference-optimisation method because it directly optimises preferences without an explicit reward model or RL loop, while PPO-based RLHF remains useful when you need richer policy optimisation and can absorb the extra engineering complexity. Token-level methods such as RTO are best kept behind research gates until they show clear wins on your own eval pack.

Data flow and interfaces. Training data and synthetic preference data enter through approved dataset sources; each run emits lineage, metrics, and artefacts; successful candidates are registered with immutable version identifiers and mutable aliases such as staging and champion. Prompt versions should be versioned separately from model versions so prompt changes are auditable and reversible without rebuilding the underlying model.

Security controls. Training data must be licensed, retained, and lineage-tracked before any run starts. Third-party models and serialised weights should be treated as untrusted dependencies and scanned or sandboxed before use. NCSC guidance explicitly recommends supply-chain due diligence, scanning, isolation for third-party model artefacts, and secure life-cycle documentation.

SRE and SLO implications. The main service SLO for this phase is promotion reliability, not request latency. Recommended internal SLOs are: registry availability ≥99.9%; eval pipeline completion within agreed batch windows; and zero unauthorised promotions. For quality, define release gates such as “no model promoted unless offline task metrics, structured-output conformance, safety evals, and regression deltas all pass”. NIST TEVV and OpenAI’s eval guidance both support making evaluation a release gate rather than a one-off lab exercise.

Observability and cost attribution. Attribute cost at the run, dataset, model family, and team level. Training efficiency should be tracked as total tuning cost versus task metric lift; inference efficiency should be projected at this stage using expected token, context, and adapter routing profiles. The FinOps Foundation explicitly calls out training efficiency, inference efficiency, token consumption efficiency, ROI templates, and strategic outcome alignment for AI workloads.

Failure modes and mitigations. The dominant failure modes are data leakage, weak lineage, benchmark overfitting, reward misspecification, and adapter sprawl. Mitigate them by mandatory dataset provenance, held-out evals, promotion aliases, adapter retirement policies, and canarying new model/prompt bundles before wide rollout. Canary release remains the safest default for materially new behaviour.

Operational checklist. - Register every base model, adapter, prompt family, and dataset before promotion. - Require offline functional, safety, and structured-output evals for every release candidate. - Separate immutable artefact versions from mutable deployment aliases. - Record full lineage from source dataset to production deployment. - Treat RTO-style token-level optimisation as experimental until proven in controlled evaluation.

Multi-tier inference¶

Phase 2 is the production serving substrate. The purpose is to provide a stable, scalable inference fabric that decouples HTTP ingress, scheduling, GPU execution, and deployment policy, while retaining an OpenAI-compatible or equivalent application interface. vLLM is the recommended serving core because PagedAttention reduces KV-cache waste, and its serving stack supports continuous batching, distributed inference, prefix caching, quantisation, and adapter-based deployment patterns. Ray Serve is the recommended orchestration layer when you need autoscaling, placement control, programmable routing, and production-grade distributed serving workflows.

Responsibilities. Platform engineering owns cluster topology, runtime images, placement and scaling policies, request routing, and deployment updates. SRE owns canaries, rollback, burn-rate alerting, and capacity management. AI engineering owns engine flags, model configs, quantisation choices, and request-shaping. This is intentionally a separate concern from the agent layer.

Required components. The minimum production stack is: gateway, router, Ray Serve application, vLLM engine workers, model registry integration, autoscaling, tracing, budget tagging, and deployment observability. For larger models, use tensor parallelism when the model does not fit on a single GPU but still fits cleanly across GPUs within a node; add pipeline parallelism when the model must be split across nodes or where NVLink-class interconnects are absent and PP provides lower communication overhead. vLLM’s own guidance is to set tensor-parallel size to GPUs per node and pipeline-parallel size to nodes for typical multi-node deployments.

Data flow and interfaces. Requests enter via the gateway, are labelled with tenant, policy, and budget metadata, hit a capability-cost router, then are sent to a deployment with explicit model, adapter, context, and sampling parameters. The response path must return not only the completion, but also trace identifiers, token usage, router decision metadata, and any tool-call envelopes needed by the orchestrator. MLflow AI Gateway and OpenTelemetry-compatible tracing patterns fit this interface model well.

Security controls. Production serving should run with workload identity, short-lived credentials, secret isolation, tenant-aware quotas, and policy checks on model, tool, and context selection. SPIFFE/SPIRE-style workload identity and ABAC-style authorisation are a strong fit because authorisation decisions can include workload, tenant, model class, environment, and action attributes rather than only roles.

SRE and SLO implications. Core serving SLOs should include availability, Time to First Token, inter-token latency, non-2xx rate, saturation, autoscale lag, and queue-age bounds. The two most operationally useful latency indicators are TTFT and p95 ITL because they separate prefill pain from decode pain. vLLM, Ray Serve, and LMflow tracing all expose or support the instrumentation necessary to track these directly.

Observability and cost attribution. Attribute each request to model, adapter, tenant, product, environment, and workflow. This is the minimum metadata needed later for FinOps, incident analysis, and release correlation. Cost should include provider spend, GPU runtime, network egress, and review or retry burden where relevant. The FinOps Foundation specifically notes that AI cost allocation is harder because architectures are multi-tier and consumers of shared model outputs can differ across modules and workflows.

Failure modes and mitigations. Common failures are placement mismatch, poor GPU packing, queue blowups, hot-spotting to large models, unbounded retries, and silent quality regressions during rollouts. Mitigate them with conservative autoscaling floors on critical paths, canary rollouts, request-class routing rules, bounded retries, and rapid rollback by model/prompt alias rather than image rebuild.

Operational checklist. - Keep model, prompt, and deployment versions independently reversible. - Use TP first inside a node; add PP when node memory or interconnect limits require it. - Emit TTFT, ITL, queue age, token counts, and router decision metadata on every request. - Route by task class and business value, not by developer preference. - Make canary rollback automatic on SLO or error-budget breach.

Disaggregated prefill, decode and KV cache¶

Phase 3 addresses the operational mismatch between prompt prefill and token decode. Ray’s prefill/decode disaggregation guidance is clear: separating prefill from decode allows independent optimisation, reduces interference between the two phases, and supports independent scaling. This matters because prefill is comparatively compute-heavy while decode is dominated by weight and KV movement, so mixing both on the same GPU pool creates avoidable contention.

Responsibilities. Platform teams own the worker-pool split and network topology. AI infra teams own chunked prefill, cache transfer settings, scheduling, and decode-worker memory tuning. SRE owns phase-specific telemetry, especially TTFT, ITL, GPU memory pressure, and network transfer statistics.

Required components. You need separate worker groups, a hand-off protocol for KV segments, engine support for chunked prefill, and a transport path that does not thrash host memory. LMCache’s report documents the practical need for efficient KV movement across GPU, CPU, storage, and network layers, and explicitly frames prefill/decode disaggregation as a first-class workload.

Data flow and interfaces. The ingress router sends initial requests to prefill workers. Prefill workers tokenise and process the prompt, create KV pages or segments, and hand those segments to the decode side. Decode workers attach the incoming KV state, continue generation, and stream response tokens. The phase boundary should export transfer size, transfer duration, chunk count, decode attach latency, and subsequent TTFT delta, because these are the metrics you need to decide whether the disaggregation is helping or hurting.

Security controls. KV state is sensitive because it encodes user input, system prompts, retrieved documents, and sometimes tool outputs. Treat it as regulated runtime state: encrypt traffic in transit, isolate pools by tenant or trust domain where required, redact or hash cache keys where possible, and enforce cache-retention limits. NIST and NCSC both emphasise secure handling of data and AI system outputs throughout the lifecycle, not merely at rest in traditional databases.

SRE and SLO implications. Prefill/decode disaggregation should only be enabled when it improves one of three things materially: TTFT, throughput, or cost per useful token. It adds another failure surface, so you should measure p95 and p99 transfer stalls, attach failures, and cache-corruption or cache-miss fallbacks. If transfer overhead dominates, revert to co-located serving for that traffic class.

Observability and cost attribution. Attribute network transfer cost, decode-worker idle time, and prefill-worker saturation separately. Without phase-level attribution, the organisation often misdiagnoses the issue as “the model is slow” when the actual problem is cross-worker transfer, burst imbalance, or poor prompt shaping.

Failure modes and mitigations. The main failure modes are transfer underutilisation for small chunks, KV-format mismatches across engine versions, and phase imbalance that starves one pool and stalls the other. LMCache’s report highlights precisely these compatibility and I/O-efficiency issues. Mitigate them with version pinning, chunk sizing policies, backpressure, and canaries when changing engine versions or page formats.

Operational checklist. - Enable disaggregation only for traffic classes that show measurable TTFT or cost benefit. - Emit transfer-size and attach-latency metrics at the same level as TTFT and ITL. - Version-control KV page format assumptions with the engine build. - Separate cache-retention policy from serving-policy code. - Keep a fast rollback path to co-located prefill/decode.

LMCache multi-tier storage¶

Phase 4 turns KV cache into managed infrastructure. The best current open design is LMCache, which exposes KV caches through a control API, supports cache offloading and prefill/decode disaggregation, and allows orchestration across GPU, CPU, storage, and network layers. Its technical report also reports up to 15× throughput improvement in some workloads when combined with vLLM, although those gains should be treated as workload-dependent rather than guaranteed.

A practical enterprise tiering model is shown below.

| Tier | Medium | Role | When to use |

|---|---|---|---|

| Hot | GPU HBM | Live working set for active forward passes | Live decode, active shared prefixes |

| Warm | CPU DRAM / pinned memory | Fast spill and reuse buffer | Burst control, recent prefixes, offload buffer |

| Cool | Local NVMe | High-capacity local persistence | Long prompts, transient session state, cost optimisation |

| Shared | Remote cache / object store | Cross-node and cross-session reuse | Shared enterprise context, fleet-level reuse |

This hierarchy reflects the LMCache report’s documented movement and orchestration across GPU, CPU, storage, and network layers, alongside Ray’s object-spilling patterns for local overflow management.

Responsibilities. AI infra owns cache topology, cache index design, object layout, compression or eviction policy, and connector compatibility. Platform engineering owns storage classes, remote durability, and access control. SRE owns hit rate, restore latency, spill rates, and correctness fallbacks. FinOps owns the cost trade-off between HBM, RAM, NVMe, and remote tiers.

Data flow and interfaces. On request arrival, the serving tier queries the cache index for prefix or context matches. On a hit, the required KV chunks are restored or attached from the highest available tier. On a miss, the system computes prefill, writes cache segments asynchronously, and emits index metadata so future requests or workers can reuse it. Request routing should be cache-aware where workloads are prefix-heavy; Ray’s prefix-aware router exists exactly for this reason.

Security controls. KV caches are not “just performance data”. They can embed sensitive enterprise content. Therefore, shared tiers require tenant scoping, encryption at rest, expiry policies, access logging, and an explicit policy for whether cache reuse is allowed across users, teams, or legal entities. NIST’s trustworthiness characteristics and NCSC’s secure deployment guidance both support treating these derived artefacts as governed data.

SRE and SLO implications. The key SLOs here are cache hit rate by class, restore latency by tier, spill rate, and degraded-mode success when cache services fail. If the cache plane fails, serving must degrade safely to recompute rather than fail permanently. That means recompute fallback is not optional; it is a resilience requirement.

Observability and cost attribution. Cost must be attributed at least by tier, because HBM savings achieved by aggressive remote spill can be erased by excessive restore traffic. Track: saved prefill tokens, remote-restore bytes, spill/restore throughput, hit ratio by namespace, and cache value density, meaning “saved milliseconds or tokens per stored GiB”. Recommendation: rank cache namespaces quarterly and evict those that consume storage without producing measurable saved compute or lower TTFT.

Failure modes and mitigations. The major risks are stale or incorrect cache keying, cache poisoning, excessive spill latency, and index bloat. Mitigate with deterministic key construction, per-tenant namespaces, TTLs, integrity metadata, and hit/miss audits sampled against full recompute.

Operational checklist. - Treat every cache namespace as data with an owner, TTL, and legal boundary. - Make recompute fallback the default failure mode. - Measure saved prefill as a first-class benefit, not just raw hit percentage. - Keep tier economics visible to FinOps and SRE, not just AI infra. - Audit cache-key correctness against periodic recompute samples.

Retrieval versus cache-augmented knowledge¶

Phase 5 governs how runtime knowledge reaches the model. The correct enterprise answer is hybrid, not dogmatic. RAG is the right default for unbounded or frequently changing corpora because it retrieves relevant external documents at query time. CAG-style or prompt-cached approaches are attractive when the corpus is bounded, stable, and reused frequently enough that pre-computation or prefix reuse materially lowers latency and cost. The original RAG paper, subsequent CAG work, and current prefix/prompt-caching implementations all support this distinction.

| Dimension | RAG | CAG / prompt-cached context |

|---|---|---|

| Corpus volatility | Best for changing data | Best for stable data |

| Corpus size | Works with very large corpora | Bounded by usable context and cache strategy |

| Latency | Retrieval + prompt build + prefill | Lower when cache reuse is high |

| System complexity | Higher due to indexing and re-ranking | Lower if corpus is stable |

| Refresh model | Incremental index updates | Cache rebuild or invalidation required |

The long-context evidence matters here. “More tokens” is not a substitute for disciplined context design; lost-in-the-middle effects and general long-context degradation mean teams still need intelligent selection, ordering, and compaction.

Responsibilities. Data platform teams own ingestion, chunking, metadata, and retention. Search teams own indexing quality, retrieval latency, and hybrid relevance. AI teams own query construction, re-ranking, grounding strategy, and answer evaluation. Governance owns access policy, source provenance, and refresh SLAs.

Required components. For RAG: document pipeline, hybrid index, metadata filters, re-ranker, grounding format, and source provenance. For CAG-like knowledge: versioned golden corpora, cache build jobs, invalidation policy, and runtime association between cache namespace and model/prompt version. For both: traceable source IDs and evaluation on grounding quality.

Data flow and interfaces. RAG flow: ingest → parse → chunk → index → retrieve → re-rank → prompt build → infer → cite and log source IDs. CAG flow: approve stable corpus → build cache/prefix artefact → register artefact → attach at request time → infer → record cache namespace and version. The same user-facing API can support both paths if the orchestrator knows which context mode was selected.

Security controls. Document-level ACLs must be enforced before retrieval and before cache construction. Never build cross-tenant golden caches unless the underlying corpus is genuinely shared and legally approved to be shared. Provenance should be recorded at source- and chunk-level for retrieval, and at corpus-version level for pre-cached knowledge.

SRE and SLO implications. RAG SLOs should include retrieval latency, retrieval precision, re-ranker latency, grounding rate, and answer-support rate; CAG SLOs should include cache build time, cache attach latency, invalidation lag, and stale-answer rate. Recommendation: do not compare RAG and CAG on latency alone; compare cost and quality for each knowledge class.

Observability and cost attribution. Attribute vector-store, re-ranker, embedding, and retrieval costs separately from generation cost. For pre-cached knowledge, attribute build cost and amortised cache value across the workloads that use it. This is necessary to avoid the common error of underestimating RAG cost and overestimating the expense of cache preparation.

Failure modes and mitigations. RAG fails through poor chunking, stale indexes, ACL leaks, irrelevant retrieval, and citation gaps. CAG fails through staleness, under-scoped invalidation, and over-large static prefixes. Mitigate with metadata filters, answer-support evals, freshness tags, bounded context windows, and explicit rebuild schedules.

Operational checklist. - Classify each knowledge domain by volatility, size, reuse, and access boundary. - Use RAG for fresh or unbounded corpora; use CAG-style reuse for bounded golden corpora. - Enforce ACLs before retrieval and before cache construction. - Record provenance IDs in traces for both retrieval and cache attachment. - Evaluate grounding quality continuously, not just retrieval recall.

Agentic execution, security and operations phases¶

Agentic workflows and context engineering¶

Phase 6 is where the platform shifts from “answer generation” to “goal-directed work”. The main problem is not only tool orchestration; it is context discipline. DORA’s research shows that AI increases throughput but also increases downstream verification pressure and instability when the surrounding platform is weak. Long-context research similarly shows that simply carrying more context is not enough. This is why this reference architecture uses CDAD as an operating discipline: durable knowledge lives outside the active prompt, the active prompt is built from a minimal task packet, every step has explicit acceptance criteria, and execution traces are compacted after use.

Responsibilities. Application teams own workflow goals, task packets, and human approval rules. Platform teams own agent runtime, memory stores, session handling, prompt registry integration, and tool contracts. Security owns privileged-action boundaries and tool allowlists. SRE owns end-to-end traceability and quality regression detection.

Required components. The minimum viable agent platform includes: orchestrator, prompt registry, tool registry, compact state store, trace store, approval service, and sandboxed execution path. Prompt versioning and trace-linked lineage are essential for operational repeatability and post-incident review.

Data flow and interfaces. A user goal becomes a task packet with scope, acceptance criteria, risk class, context references, and tool permissions. The agent loads only the packet and referenced durable artefacts, performs a step, stores structured evidence, and either concludes or emits a new packet. This approach shrinks active context, improves reproducibility, and makes approval boundaries explicit. It is also substantially easier to budget than unconstrained “chat-with-memory” loops.

Security controls. Every tool call crosses a trust boundary. Input sanitisation, output classification, scope limiting, and human approval for risky actions must therefore exist at the packet and tool-contract level, not just in the system prompt. NCSC guidance specifically recommends threat modelling, input checks and sanitisation, restrictions on AI-triggered actions, least privilege, and secure defaults.

SRE and SLO implications. Agent SLIs should include task success rate, tool success rate, human-escalation rate, retry depth, loop-detection count, context-compaction ratio, and cost per successful task. Traditional p95 latency alone is not enough because a slow but successful four-step workflow may be better than a fast but failed one-step attempt.

Observability and cost attribution. Agent traces should include the user goal, packet ID, prompt version, model choice, retrieved context IDs, tool inputs and outputs, approval decisions, and final outcome. MLflow tracing is well aligned with this because it captures intermediate-step inputs, outputs, metadata, latency, token usage, feedback, and evaluation hooks.

Failure modes and mitigations. Common failures are context bloat, repeated retrieval, recursive loops, hidden scope expansion, and unverified tool outputs being treated as truth. Mitigate with packet TTLs, retry caps, loop breakers, output schema validation, and approval triggers for privilege, externally visible changes, or risky side effects.

Operational checklist. - Turn every non-trivial task into an explicit packet with scope and acceptance criteria. - Store durable knowledge outside the active prompt. - Compact traces and tool outputs after each step. - Define approval triggers before deployment. - Cap retries, tool calls, and reasoning depth per packet class.

DevSecOps and the agentic security boundary¶

Phase 7 creates the hard safety boundary around agent execution and AI supply chains. The relevant governance basis is the NIST AI RMF, which makes trustworthy AI a combination of governance, measurement, management, and explicit life-cycle controls; the NCSC secure AI guidance, which structures secure design, development, deployment, and operation; and CISA’s Secure by Design approach. Together they imply that AI security is not a moderation feature. It is a software and systems engineering discipline.

Execution isolation. For privileged tool use, code execution, and file/system mutation, this reference design recommends microVM or equivalent hardware-virtualised isolation rather than ordinary shared-kernel containers. Amazon Web Services Firecracker project docs describe Firecracker as purpose-built for secure, multi-tenant services, with a minimal device model, microVM creation rates up to 150 per second, startup in as little as 125 ms, and memory overhead below 5 MiB; Kata Containers provides a second layer of defence through hardware virtualisation over traditional containers. That does not make either option “safe by default”; it means the platform has a more defensible starting point for untrusted workloads.

Gateway and inspection layer. Every request should pass through an AI gateway that centralises model auth, logging, budgets, routing, and guardrails. MLflow AI Gateway provides a good reference capability set with centralised security, routing, usage tracking, budget controls, and fallbacks, while a specialised transparent proxy such as LLMTrace documentation can add request/response inspection, prompt-injection detection, PII scanning, latency and TTFT metrics, and cost accounting. Use project-specific tools like LLMTrace as optional examples, not as normative standards; they should be admitted only after your own security and performance evaluation.

Deterministic verification. The decisive design rule is that agents may suggest, but deterministic systems must decide before execution when the action changes state or touches control boundaries. That means schema validation, static analysis, test execution, policy-as-code, and signed promotion gates. This is aligned with NIST’s TEVV emphasis, NCSC’s secure-development guidance, SLSA provenance, CycloneDX SBOM, and OPA policy enforcement.

Tenant isolation, identity, secrets. Use workload identity, short-lived credentials, secret rotation, and attribute-based authorisation. Service identities should be bound to tenant, environment, and workload purpose; secrets should never be embedded in prompts; and high-risk tools should require gateway-minted, scoped credentials at execution time. SPIFFE/SPIRE, ABAC, and NIST key-management guidance fit this architecture well.

Red-teaming. Red-teaming should cover model behaviour, tool execution, agent hijacking, workflow drift, retrieval poisoning, and supply-chain compromise. NIST defines AI red-teaming as a structured testing effort to find flaws and vulnerabilities in an AI system. NIST’s recent agent-hijacking work showed attack success rates rising sharply when novel attacks were developed against agents, which is a strong argument for scheduled adversarial testing rather than assumption-driven controls. MITRE ATLAS should be used as the common attack-language baseline.

SRE and operational implications. Security gates need SLOs too: policy decision latency, approval-service availability, sandbox startup latency, false-positive rate for gateway blocks, and deterministic verification pass rate. A security plane that is too slow or too noisy will be bypassed in practice.

Failure modes and mitigations. Main risks: prompt injection, tool misuse, unsafe dependency changes, hidden scope expansion, model poisoning via external artefacts, and leakage through traces. Mitigate with sandboxing, output verification, allowlists, provenance, PII redaction in traces, and periodic external red-team review.

Operational checklist. - Run risky tool execution inside microVM-backed or equivalent hardware-isolated workers. - Pass all model traffic through a gateway that enforces auth, routing, budgets, and logging. - Verify all state-changing outputs deterministically before execution. - Maintain SBOM and provenance for models, prompts, dependencies, and deployment images. - Schedule red-teaming against agents, not only against base models.

SRE, observability and telemetry¶

Phase 8 is the operating discipline that keeps the platform reliable and debuggable. Google’s SRE guidance still applies, but the useful signals shift. Burn-rate alerting, canarying, rollback, and release hygiene remain core, but AI systems also need request-level traceability across prompts, retrieval, tools, model versions, policy decisions, and costs. OpenTelemetry and MLflow Tracing are the strongest practical foundation because they support correlation across traces, metrics, logs, and GenAI-specific metadata.

Responsibilities. SRE owns SLOs, error budgets, alerting, canary policy, rollbacks, and incident review. Platform teams own semantic instrumentation and trace propagation. AI teams own quality SLIs, eval datasets, and response-quality regression detection. FinOps owns cost telemetry and business attribution.

Core SLIs. Recommended platform-wide indicators are: availability; p95 and p99 TTFT; p95 ITL; structured-output conformance; retrieval support rate; cache hit rate; tool success rate; policy-denial rate; prompt-compression ratio; manual-escalation rate; and cost per successful task. MLflow Tracing explicitly supports intermediate-step metadata, latency and token usage, human feedback, and evaluation inspection; OpenTelemetry provides the common signal model and resource correlation model.

Error-budget burn. Burn-rate alerting is better than component-threshold alerting because it asks whether the service is consuming reliability too quickly relative to its allowed budget, rather than whether one host metric crossed an arbitrary line. Use at least a fast window and a slow window, and make automatic rollback available for deployments that breach both.

Release engineering. Every model, prompt, router, and policy change should be canaried. New retrieval indexes and prompt sets are deployment artefacts and should be rolled out the same way as code. The rollout controller must be able to abort on latency, quality, or safety regressions, not only on HTTP error spikes.

Data retention and audit. Traces are highly valuable for debugging and cost analysis but can become a security and privacy liability if retained indiscriminately. Sample aggressively in low-risk traffic, retain full fidelity for policy or incident classes, redact PII where possible, and separate operational retention policy from legal-hold policy. MLflow supports PII redaction in traces and async logging for production use.

Failure modes and mitigations. Typical failures are missing trace context across services, overwhelming trace volume, poor semantic conventions, and teams measuring technical latency without measuring answer correctness. Mitigate with mandatory instrumentation standards, adaptive sampling, eval-linked traces, and SLO reviews that include quality and cost, not only infra health.

Operational checklist. - Instrument every request with trace, cost, model, prompt, policy, and tenant metadata. - Alert on error-budget burn and quality regression, not only infrastructure thresholds. - Canary model, prompt, retrieval, and policy changes separately. - Redact sensitive fields before long-term retention. - Tie incidents to trace IDs, rollout IDs, and budget ownership.

AI FinOps and value governance¶

Phase 9 is a cross-cutting governance plane, but it is also an explicit phase because enterprise AI programmes fail when value governance arrives too late. The FinOps Foundation’s AI guidance pushes teams toward training efficiency, inference efficiency, token-consumption efficiency, compliance effectiveness, and ROI templates; DORA pushes the same agenda from the software-delivery side by showing that AI is an amplifier, that verification burdens are real, and that throughput gains can be offset by downstream instability. This report therefore recommends governing AI with a tokens → intelligence → value model.

Purpose. Make AI spend attributable, forecastable, optimisable, and governable at the level of products, workflows, teams, and business outcomes. The goal is not to meter tokens for their own sake; it is to understand whether tokens, model calls, GPU minutes, retrieval operations, and human review time produced verified outcomes.

Responsibilities. Finance and the AI investment council own funding models and value templates. Product and engineering own outcome hypotheses and workflow ownership. Platform owns telemetry and chargeback/showback plumbing. Security and risk own approval gates for risky spend or risk-bearing workflows. Procurement owns vendor and entitlement review. The FinOps Foundation explicitly highlights closer collaboration with procurement, security, and leadership for AI scopes.

Required components. Cost and usage ingestion, per-request attribution, budget policies, model-routing policy, approval rules, vendor catalogue, showback or chargeback views, and a value taxonomy that links workloads to outcomes. MLflow AI Gateway’s usage tracking and budget controls are useful operational handles, but they only become a governance plane once joined to product and SRE data.

Data flow and interfaces. Every request and every workflow step must emit cost events with: tenant, product, workflow, model, adapter, knowledge path, tokens, latency, outcome status, and trace ID. These events then join with business KPIs, release metrics, review time, and incident data to produce unit economics. That is the point at which AI FinOps becomes more than invoice interpretation.

Security and approval controls. Approval gates are economic controls as well as security controls. Require approval for public API changes, new dependencies, destructive actions, schema migrations, external network calls, privilege changes, or scope expansion beyond the task packet. That recommendation is supported by both secure-AI guidance and DORA’s message that local speed gains can be lost to downstream chaos when controls are weak.

SRE and SLO implications. AI FinOps and SRE are inseparable. If AI increases deployment volume but also raises change failure rate and recovery burden, the value case weakens materially. DORA’s framing is especially useful here because it explicitly connects throughput and instability rather than treating them as separate concerns.

Recommended formulas. The formulas below are proposed operational metrics for this reference architecture. They are design recommendations, not industry-standard accounting rules, but they are grounded in FinOps and DORA measurement logic.

- Tokens per verified outcome =

total input + output + tool + retrieval tokens / count(verified outcomes) - Cost per verified change =

(model spend + GPU/runtime spend + retrieval spend + apportioned review hours + apportioned incident/rework cost) / count(changes released and verified) - Verification tax ratio =

(review hours + rework hours + failed-eval remediation hours) / AI-assisted delivery hours - Cost per successful agent task =

(all AI + infra + human escalation cost for task class) / count(tasks completed within policy and quality bounds) - Input-token share of total spend =

input token cost / total AI runtime cost - Instability tax =

post-AI failure cost – baseline failure cost - Saved prefill value =

reused prefix tokens × marginal prefill cost per token

Failure modes and mitigations. The largest economic losses come from waste patterns: oversized models for simple work, repeated retrieval, recursive loops, abandoned runs, excessive trace retention, and generated change volume that produces more review burden than business value. The remedy is architectural, not just financial: routing, packet discipline, cache reuse, deterministic automation first, and approval boundaries.

Operational checklist. - Give every workload an owner, budget, and business outcome hypothesis. - Route by value and risk, not only by capability. - Report cost per verified outcome as a standard metric. - Model review burden and instability cost as part of total AI cost. - Use spend controls inside gateways, CI/CD, and agent runtimes rather than only in monthly reporting.

Cross-cutting governance and implementation standards¶

A production-ready enterprise architecture needs a small number of enterprise standards that apply across every phase.

Model registry and release control. Maintain a model registry with immutable versions, mutable aliases, deployment metadata, and promotion predicates. Mirror this pattern for prompt versions and tool contracts. Registry records should include safety checks, eval scores, data lineage references, and release status. MLflow’s model and prompt registries map cleanly to this need.

Data governance and lineage. Adopt a lineage standard that can connect datasets, jobs, runs, model versions, and deployments. OpenLineage gives a good common model for dataset, job, and run entities, while MLflow dataset tracking gives practical lineage from source to model predictions. The architectural rule should be simple: no untracked dataset may influence a promoted model or a production retrieval corpus.

Prompt and tool registry. Prompts, system instructions, tool schemas, and approval policies are all deployable artefacts. They require versioning, aliases, rollback, and ownership in the same manner as code. MLflow’s prompt registry explicitly supports versioning, immutability, lineage, and rollbacks.

Tenant isolation. Enforce tenant boundaries at identity, routing, cache, retrieval, and trace levels. Shared models do not imply shared caches, shared retrieval indexes, or shared traces. Use workload identity and ABAC-style rules so that authorisation decisions can include tenant, environment, action, model class, and data sensitivity.

Secrets and identity. Use short-lived workload credentials, not static embedded keys. Bind secrets to runtime execution scope and rotate automatically. Key-management guidance from NIST is the appropriate baseline for secret governance.

Release engineering. Model, prompt, retrieval, policy, and gateway changes all need canaries and rollbacks. A “safe prompt deployment” and a “safe model deployment” are both release-engineering problems. Google’s SRE canary guidance remains the most practical general reference for this.

Disaster recovery. Define RTO and RPO for every plane separately: the serving plane, control plane, cache plane, registry plane, and audit plane. A practical rule is that the control plane should usually have stricter RTO than the training plane; the audit plane needs stronger durability than the warm cache plane. Google’s DR planning guidance is explicit that smaller RTO/RPO targets cost more and should be tied to business impact.

Explainability, audit and compliance. Use the NIST AI RMF as the minimum governance baseline for trustworthy characteristics including security, transparency, explainability, privacy, and risk management. For broader management-system governance, ISO/IEC 42001 is the most relevant enterprise management-system standard in this space. For content provenance and evidence chains, C2PA-style provenance standards are relevant for generated media and signed disclosures.

Procurement and vendor governance. AI procurement is not just rate negotiation. It includes model licences, data rights, auditability, export and residency requirements, entitlements, security posture, and vendor viability. The FinOps Foundation’s AI guidance explicitly highlights coordination with procurement and the growing number of AI vendors as a material operating concern. NCSC guidance adds due diligence for external model providers and third-party libraries.

Sustainability and energy accounting. For energy and carbon accounting, the most defensible approach is to combine financial telemetry with a recognised software carbon methodology. The Green Software Foundation’s SCI methodology and the FinOps Foundation’s sustainability capability provide a workable basis; Cloud Carbon Footprint and cloud-provider carbon reporting can support implementation. Treat this as another unit-economics dimension, not as a separate ESG afterthought.

Operational artefacts¶

The following snippets are intentionally generic. They show the kind of policy gates the architecture needs, without prescribing a particular policy engine or IaC product. The controls themselves are supported by the NIST AI RMF, NCSC secure AI guidance, OPA policy-as-code, canary release practice, and FinOps AI governance.

Promotion gate for model and prompt release

policy: release_promotion

applies_to:

- model_version

- prompt_version

require:

- dataset_lineage_present: true

- offline_eval_pass: true

- structured_output_conformance >= 0.99

- safety_eval_pass: true

- regression_vs_current <= allowed_delta

- sbom_attached: true

- provenance_attestation_present: true

deny_if:

- unresolved_high_severity_findings: true

- pii_redaction_test_fail: true

- tenant_boundary_test_fail: true

action:

on_pass: promote_to_staging

on_fail: block_release

Agent execution guardrail

policy: agent_execution

defaults:

network_egress: deny

file_write_scope: task_workspace_only

max_tool_calls: 8

max_retry_depth: 2

max_reasoning_budget_tokens: 12000

require_approval_for:

- new_dependency

- schema_change

- privilege_change

- public_api_change

- destructive_operation

- external_network_call

- scope_expansion

verifiers:

- schema_validator

- static_analysis

- unit_tests

- policy_engine

fallback:

on_verifier_fail: escalate_to_human

Budget and routing policy

policy: ai_routing_and_budget

match:

task_class: deterministic

route_to: rules_or_search

else_if:

task_class: faq_stable

route_to: cached_context_model

else_if:

task_class: retrieval_fresh

route_to: mid_tier_rag_model

else_if:

task_class: complex_planning

route_to: high_capability_model

limits:

daily_budget_by_workload: required

per_request_token_cap: required

per_task_cost_cap: required

actions:

on_budget_breach:

- downgrade_model

- reduce_context

- require_human_approval

Suggested SLOs

| Domain | Example SLO | Why it matters |

|---|---|---|

| Serving | 99.95% successful responses for critical tier | Base availability target |

| Latency | p95 TTFT under class-specific objective | Separates prefill pain from decode pain |

| Quality | Structured-output conformance ≥99% on governed endpoints | Prevents silent parser breakage |

| Agentic execution | Successful task completion within policy bounds ≥95% for approved task class | Business-facing reliability indicator |

| Security | 0 unauthorised privileged executions | Hard safety goal |

| Governance | 100% of promoted models and prompts carry lineage and evaluation metadata | Auditability |

| FinOps | 100% of requests attributable to owner, product, model, and workflow | Enables unit economics |

These SLOs are consistent with SRE practice, NIST TEVV expectations, and MLflow/OpenTelemetry observability capabilities, but the exact target values must be calibrated to business criticality.

Roadmap and open questions¶

The roadmap below assumes a starting point of fragmented experimentation and aims for an enterprise-grade platform inside roughly four programme waves. The ordering follows DORA’s J-curve insight: first stabilise the operating substrate, then scale autonomy, then optimise value and sustainability.

gantt

title Enterprise AI architecture roadmap

dateFormat YYYY-MM-DD

section Foundations

Registry, lineage, identity, baseline tracing :a1, 2026-06-01, 70d

Gateway, routing, budget tagging :a2, 2026-06-15, 75d

section Serving and knowledge

vLLM and Ray production serving :b1, 2026-08-01, 90d

RAG baseline and prompt registry :b2, 2026-08-15, 75d

section Memory and agent execution

KV cache offload and cache-aware routing :c1, 2026-10-01, 80d

Sandboxed agent runtime and approval service :c2, 2026-10-15, 90d

section Optimisation and governance

FinOps unit economics and value council :d1, 2026-12-01, 75d

Sustainability accounting and vendor governance :d2, 2027-01-15, 60dA practical milestone plan is as follows.

| Milestone | Primary owner | Indicative effort | Exit criteria |

|---|---|---|---|

| Control-plane foundation: registry, lineage, tracing, identity | Platform + security | Large | Every deployment is versioned, attributable, and traceable |

| Production serving baseline: gateway, routing, vLLM, Ray, canaries | Platform + SRE | Large | Stable tiered serving with rollback and SLOs |

| Knowledge plane baseline: hybrid retrieval + prompt registry | Data/AI platform | Medium | Source-attributed answers with measurable grounding quality |

| Cache plane: offload, prefix-aware routing, LMCache-style tiering | AI infra + SRE | Medium to large | Measured reduction in TTFT or cost for prefix-heavy workloads |

| Agent execution boundary: packets, approvals, sandboxing, deterministic checks | App engineering + security | Large | No privileged autonomous execution without policy and verifier coverage |

| Value governance plane: showback, unit economics, routing controls | FinOps + product + platform | Medium | Cost per verified outcome available by workload |

| Sustainability and vendor governance | FinOps + procurement + compliance | Medium | Carbon and vendor-risk views attached to major AI workloads |

Open questions and limitations. Two areas remain less standardised than the rest of the stack. First, token-level alignment methods such as RTO are promising but not yet as operationally standardised as LoRA/QLoRA + DPO-style pipelines; treat them as experimental unless your evaluation evidence is strong. Second, CAG-style knowledge patterns are highly effective for some bounded corpora, but refresh strategy, cache invalidation, and legal separation need sharper internal standards before broad enterprise reuse. Finally, specific gateway tools such as LLMTrace can be valuable, but they should be adopted only after internal security and performance review because they are product choices, not regulatory standards.